The biggest mistake amateurs make with performance gadgets is trusting their expensive data blindly; the key is critical interpretation, not just collection.

- Device accuracy is highly context-dependent; a wrist-based heart rate monitor that’s fine for a steady run can be useless for high-intensity interval training.

- Generic “readiness” or “recovery” scores often fail because their algorithms can’t distinguish between training stress and life stress, leading to poor recommendations.

- Meaningful upgrades are driven by advances in core sensor technology, not by annual model releases with minor software features.

Recommendation: Prioritize learning to calibrate objective data with your own subjective feeling (like perceived exertion) over chasing the newest, most feature-packed device.

You bought the watch. You downloaded the app. You have a dashboard filled with gleaming charts showing your sleep stages, heart rate variability (HRV), and training load. The promise of wearable technology is seductive: for a few hundred dollars, you can access the kind of performance data once reserved for elite athletes in a lab. Yet, for many amateur athletes, this data firehose leads not to personal bests, but to confusion, anxiety, and a feeling of being enslaved by numbers. You followed a “poor” recovery score, skipped a workout you felt ready for, and then felt sluggish the next day. The data is there, but the results aren’t.

The common advice is often to simply “track everything” or “buy the latest model,” assuming that more data or newer features will automatically solve the problem. This approach misses the fundamental point. The issue isn’t a lack of information; it’s a lack of interpretation. These devices are powerful tools, but they are not infallible oracles. They are prone to measurement errors, and their generic algorithms can’t possibly understand the unique context of your life—a stressful day at work, poor nutrition, or mental fatigue.

What if the key to unlocking your potential wasn’t in getting more data, but in getting smarter about the data you have? The secret lies in moving beyond passive data collection and developing a critical filter. It’s about learning to distinguish the valuable “signal” from the distracting “noise.” This means understanding the limitations of your technology, calibrating its numbers against your body’s own feedback, and knowing when to trust your intuition over an algorithm.

This guide cuts through the marketing hype. We will critically examine the most common performance gadgets, exposing where they fail and revealing how to use them effectively. We will explore the fatal flaws in wrist-based heart rate monitors, the mistakes made by blindly following recovery scores, and the features that can genuinely help prevent overtraining. By the end, you’ll have a framework for turning your gadgets from noisy masters into quiet, effective servants that truly help you improve.

Summary: Which Performance Gadgets Actually Improve Metrics for Amateurs?

- Why wrist-based heart rate monitors fail during HIIT (and what to use instead)?

- How to use a power meter without becoming a slave to the numbers?

- Smart Watch vs. Chest Strap: Which is the better investment for accuracy?

- The recovery score mistake that leads to undertraining

- When to upgrade your tech: The 3-year cycle rule

- GPS Vests vs. Camera Tracking: Which is more accurate for indoor sports?

- Why generic “readiness scores” might misinterpret your specific fatigue?

- Which Training App Feature Prevents Over-Training in Amateurs?

Why wrist-based heart rate monitors fail during HIIT (and what to use instead)?

For steady-state cardio like jogging, your smartwatch’s optical heart rate (HR) sensor is generally adequate. But the moment you introduce High-Intensity Interval Training (HIIT), its reliability plummets. The technology, which uses LEDs to detect blood volume changes under the skin (photoplethysmography or PPG), is highly susceptible to errors from rapid arm movements, muscle flexing, and sweat interfering with the sensor. This creates a significant data lag and often misses the rapid peaks and troughs of your heart rate during short, intense efforts, rendering the data almost useless for precise interval training.

While some studies show that, under specific conditions, the difference can seem small (a bias of −0.2 bpm relative to chest straps, with ±2.5 bpm variance), even this small variance is enough to misclassify your effort level during a 30-second sprint. The “signal” you need is precise and immediate, but what you get is smoothed-out, delayed “noise.” This can lead you to believe you’re not working hard enough during an interval or not recovering sufficiently between them.
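To make the "smoothed-out, delayed" problem concrete, here is a toy sketch in Python. It is not a model of any real sensor: the heart rate trace is synthetic, and treating the wrist reading as a 15-second trailing average is purely an assumption for illustration, as is the 170 bpm zone threshold.

```python
def trailing_average(samples, window):
    """Average of the last `window` samples at each point (fewer at the start)."""
    out = []
    for i in range(len(samples)):
        chunk = samples[max(0, i - window + 1): i + 1]
        out.append(sum(chunk) / len(chunk))
    return out


def first_index_at_or_above(samples, threshold):
    """Index (here: second) of the first reading at or above the threshold."""
    return next((i for i, s in enumerate(samples) if s >= threshold), None)


def seconds_at_or_above(samples, threshold):
    """Number of one-second readings at or above the threshold."""
    return sum(1 for s in samples if s >= threshold)


# Synthetic 1 Hz trace: 20 s easy, 30 s sprint, 20 s easy (made-up values).
true_hr = [140] * 20 + [180] * 30 + [140] * 20
smoothed = trailing_average(true_hr, window=15)  # assumed smoothing window

zone_floor = 170  # hypothetical lower bound of the target zone
lag = first_index_at_or_above(smoothed, zone_floor) - first_index_at_or_above(true_hr, zone_floor)
print("seconds of delay before the zone registers:", lag)
print("time in zone, true vs smoothed:",
      seconds_at_or_above(true_hr, zone_floor),
      seconds_at_or_above(smoothed, zone_floor))
```

In this toy case the smoothed trace reaches the zone late and credits less time in it than the underlying effort, which is the kind of mismatch that matters for short intervals.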

The solution isn’t to abandon tracking, but to use the right tool for the job. For HIIT, a chest strap is non-negotiable for accuracy (more on that later). However, a more powerful long-term strategy is to develop your internal sense of effort through Metric-to-Feeling Calibration. This involves learning to pair your objective HR data with your subjective Rate of Perceived Exertion (RPE). By consciously rating your effort on a 1-10 scale during workouts and later comparing it to your accurate chest strap data, you train your brain to recognize what different HR zones *feel* like. Over time, you become less dependent on the screen and more in tune with your body.

A practical method to develop this internal awareness is the RPE-Based HIIT Calibration:

- Start with RPE: For two weeks, perform HIIT sessions using only the Rate of Perceived Exertion (RPE) scale. During each interval, rate your effort from 1 to 10 without looking at a monitor.

- Calibrate with Data: After the initial phase, add a chest strap monitor. Continue to focus on your RPE during the session.

- Post-Workout Review: After each workout, compare your perceived effort for each interval with the actual heart rate data. Note where your perception matched the data and where it differed.

- Refine and Repeat: Use this feedback to refine your internal scale. With practice, you’ll be able to hit your target training zones by feel alone, making the technology a verification tool rather than a crutch.
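The post-workout review step can be sketched in a few lines of Python. This is a minimal illustration, assuming you have logged one RPE rating and one average chest strap heart rate per interval; the function names, the sample numbers, and the maximum heart rate of 190 are all hypothetical.

```python
from collections import defaultdict
from statistics import mean


def rpe_to_hr_table(intervals, max_hr):
    """Average heart rate (as % of max) observed for each RPE rating.

    `intervals` is a list of (rpe, average_hr) pairs, one per interval.
    """
    by_rpe = defaultdict(list)
    for rpe, avg_hr in intervals:
        by_rpe[rpe].append(100 * avg_hr / max_hr)
    return {rpe: round(mean(vals), 1) for rpe, vals in sorted(by_rpe.items())}


def inconsistent_ratings(table):
    """Neighbouring RPE pairs where the higher rating had the lower heart rate."""
    items = list(table.items())
    return [
        (low_rpe, high_rpe)
        for (low_rpe, low_hr), (high_rpe, high_hr) in zip(items, items[1:])
        if high_hr < low_hr
    ]


# Made-up log: (RPE given during the interval, average HR from the chest strap)
intervals = [(7, 160), (8, 171), (9, 180), (8, 169), (7, 171), (9, 182)]
table = rpe_to_hr_table(intervals, max_hr=190)
print(table)
print(inconsistent_ratings(table))
```

The resulting table is a personal picture of what each effort rating tends to correspond to, and any pair flagged by `inconsistent_ratings` marks a spot where perception and data disagreed and deserves a closer look.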

How to use a power meter without becoming a slave to the numbers?

The power meter is the gold standard for cyclists and runners, providing an objective, instantaneous measure of work output. Unlike heart rate, it’s unaffected by heat, caffeine, or stress. However, this beautiful, raw data is also a perfect trap for the amateur athlete, leading to a state of “data-slavery.” Riders become obsessed with hitting a specific wattage, their eyes glued to the bike computer, completely detached from the feeling of the ride. This kills the joy of training and, paradoxically, can hinder performance by stifling the intuitive sense of pacing and effort that is crucial for racing.

Breaking free from this digital prison requires a conscious strategy to put the numbers back in their place as a tool, not a master. This involves a deliberate practice of training by feel, using the power meter primarily for post-ride analysis and targeted workouts, rather than as a real-time report card. Covering your head unit display during certain rides is a powerful technique to force yourself to reconnect with your body’s signals—the feeling in your legs, your breathing rate, and your overall sense of exertion.

This is the essence of sensory calibration: reconnecting with the physical effort of training, using technology as a silent partner rather than a loud commander. One effective framework for managing this is the “Rule of Three” data management strategy, which helps prevent overwhelm while still leveraging the power of the data.

Case Study: The “Rule of Three” Data Management Strategy

Professional athletes using advanced wearable systems like Catapult have found success by strictly limiting their data focus to three distinct levels. During a session, they focus on only one real-time metric (e.g., pace or feel). For their post-session review, they analyze just two key metrics (e.g., average power and time in zone). Finally, they conduct a broader analysis of only three weekly trends (e.g., total training load, fatigue patterns, and performance improvements). This tiered approach improves training outcomes by preventing data overload and ensuring that focus remains on actionable insights.

Smart Watch vs. Chest Strap: Which is the better investment for accuracy?

This is the central battleground for heart rate accuracy, and the answer is unequivocal: for serious training, the chest strap is the superior investment. The difference lies in the fundamental technology. A smart watch uses optical PPG sensors, which are convenient but inherently limited for high-intensity activity. They estimate your heart rate from light reflected off the blood vessels under your skin, a process easily disrupted by wrist movement, skin tone, tattoos, and the sensor shifting its position.

A chest strap, on the other hand, functions like a simplified electrocardiogram (ECG). It measures the tiny electrical signals generated by your heart as it contracts. This method is direct, incredibly precise, and virtually immune to the motion artifacts that plague wrist-based sensors. When you’re performing a 400-meter sprint or a heavy set of squats, the chest strap will capture the instantaneous spike in your heart rate, while your watch may still be trying to figure out what happened ten seconds later.

So, is a smart watch a bad investment? Not necessarily. Its value lies in convenience and 24/7 trend monitoring. It’s excellent for tracking resting heart rate, sleep patterns, and general daily activity—all useful data points for overall health. Think of it as a wide-angle lens, giving you a broad overview of your health landscape. The chest strap is your telephoto lens: you don’t use it all the time, but when you need sharp, precise detail during the most critical moments (your workouts), it is the only tool for the job. For an amateur athlete serious about performance, the best-case scenario is using both: the watch for all-day context and the strap for workout-specific precision.

The recovery score mistake that leads to undertraining

One of the most popular features on modern wearables is the “recovery” or “readiness” score. Each morning, it gives you a number, often from 1-100, advising you on how prepared your body is to handle training stress. The mistake many amateurs make is treating this score as an absolute truth. When the watch says “Recovery: 35% – Take a rest day,” they dutifully comply, even if they feel energetic and motivated. This can be a direct path to undertraining, where you consistently fail to apply enough stimulus to drive adaptation and improvement.

The problem is what I call “Algorithmic Blindness.” Your watch’s algorithm is looking at a few key physiological metrics, primarily Heart Rate Variability (HRV) and resting heart rate. While it’s true that, generally, higher HRV is associated with better adaptability to stress, the algorithm has a critical blind spot: it cannot distinguish between different types of stress. It doesn’t know if your low HRV is from a brutal leg day yesterday (physical stress) or from a terrible presentation at work followed by a fight with your spouse (mental/emotional stress). Training through the latter is often beneficial, while training through the former might be detrimental.

Blindly following a generic score that lacks this crucial context can cause you to miss valuable training windows. The solution is to use the score as a single data point, not a directive. You must learn to overlay it with your own subjective feedback. A simple but effective method is the “30-Day Subjective Override Protocol,” which helps you calibrate the device’s score against your real-world feelings and performance.

The 30-Day Subjective Override Protocol:

- Days 1-7: Each morning, record your device’s recovery score and, separately, your subjective feeling of readiness on a 1-10 scale.

- Days 8-14: Pay close attention to discrepancies. Note the days when the device’s score is low but you perform well in your workout, and vice versa.

- Days 15-21: Start identifying patterns. Does your device consistently underestimate your readiness after a day of high mental stress? Does it overestimate it after a night of poor but sufficient sleep?

- Days 22-30: Create your own personal adjustment factors. For example, “Subtract 10 points from the score after a night of bad sleep,” or “Add 10 points if I feel mentally refreshed despite a low score.”

- Ongoing: Trust your calibrated intuition. When there is a significant discrepancy between the score and how you feel, your subjective assessment should win.
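One way to turn the 30-day log into personal adjustment factors is sketched below in Python. It assumes each morning entry holds the device score (0-100), your own readiness rating (1-10), and a context tag you choose yourself; multiplying the subjective rating by 10 to put both on the same scale is an illustrative simplification, and the tags and numbers are invented.

```python
from collections import defaultdict
from statistics import mean


def adjustment_factors(log, min_days=3):
    """Average gap between subjective readiness and device score, per context.

    `log` is a list of (device_score, subjective_1_to_10, context) entries.
    A positive factor means the device tends to underestimate you in that
    context; contexts seen on fewer than `min_days` days are ignored.
    """
    gaps = defaultdict(list)
    for device_score, subjective, context in log:
        gaps[context].append(subjective * 10 - device_score)
    return {
        context: round(mean(values))
        for context, values in gaps.items()
        if len(values) >= min_days
    }


# Made-up entries for illustration only.
log = [
    (40, 7, "work_stress"), (35, 6, "work_stress"), (45, 7, "work_stress"),
    (70, 5, "bad_sleep"), (65, 6, "bad_sleep"), (75, 6, "bad_sleep"),
    (80, 8, "normal"), (60, 6, "normal"),
]
print(adjustment_factors(log))
```

With these invented numbers the device reads about 27 points low after stressful work days and about 13 points high after bad sleep, which mirrors the "add 10" and "subtract 10" style of rule described in the protocol.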

When to upgrade your tech: The 3-year cycle rule

The tech world thrives on annual upgrade cycles, and the fitness gadget market is no different. Every year brings a new watch or computer with a slightly brighter screen, a new software feature, or a different color. For the amateur athlete seeking real performance gains, falling for this marketing-driven hype is a costly mistake. The truth is, the most significant leaps in performance tracking don’t happen annually; they are tied to fundamental improvements in the underlying hardware, particularly the sensors. This is the “Sensor-First Upgrade” principle.

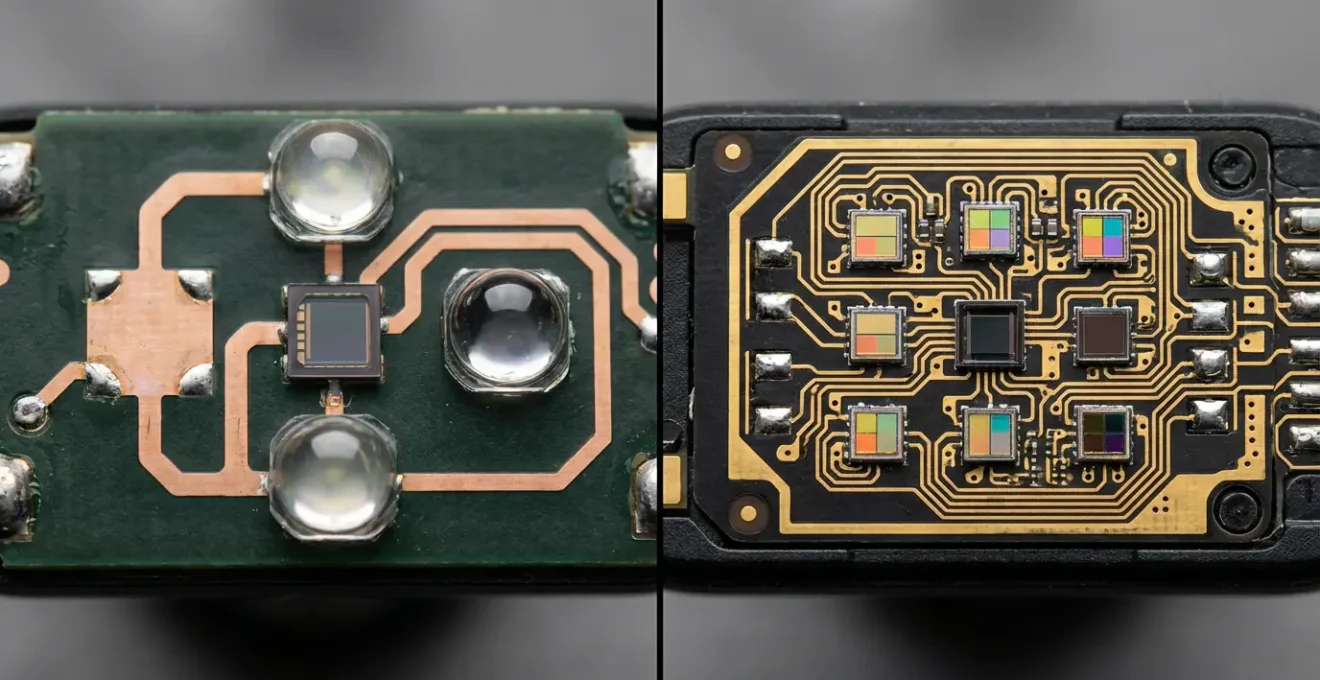

A good rule of thumb is to operate on a 3-year cycle. This is typically the timeframe in which meaningful sensor advancements occur. Upgrading from a watch with a 2-LED optical sensor to one with a 6-LED array and new wavelengths is a genuine step up in potential accuracy and reliability. Upgrading from this year’s model to next year’s, which has the exact same sensor package but adds a “mindfulness” app, is not. Before any upgrade, your primary question should be: “Has the core sensor technology for the metrics I care about fundamentally changed?”

The visual difference between an older, simpler sensor and a modern, complex one highlights where true innovation lies. It’s in the hardware that captures the raw data, not just the software that interprets it. Before pulling the trigger on a new device, apply a critical filter to ensure the upgrade is functional, not just fashionable.

Case Study: Sensor Generation vs. Model Year Analysis

In the world of professional sports, teams using wearables have found that upgrades providing revolutionary improvements are almost always tied to new sensor generations. For instance, an upgrade from a simple GPS tracker to a device incorporating advanced accelerometers and gyroscopes unlocked new insights into player movement and impact forces. In contrast, simple model-year updates that kept the same core sensors provided only minimal gains in data quality, proving that upgrade decisions should be driven by technological evolution, not marketing calendars.

GPS Vests vs. Camera Tracking: Which is more accurate for indoor sports?

For field sports like soccer or rugby, GPS vests are a fantastic tool for tracking distance, speed, and player positioning. However, the moment you move indoors for sports like basketball, volleyball, or futsal, their utility evaporates. GPS technology relies on a clear signal from multiple satellites, which is blocked by a roof. A GPS vest worn indoors will produce wildly inaccurate, often nonsensical, data about your movement. It simply isn’t the right tool for the environment.

The professional solution for indoor tracking is multi-camera optical systems. These setups use multiple cameras placed around the court to track player and ball movement with incredible precision. They can measure not just speed and distance, but also relative positioning between players, jump height, and court coverage. The accuracy is excellent, but the cost and complexity are prohibitive for any amateur, often running into tens of thousands of dollars and requiring professional installation and calibration.

So, what’s the practical alternative for the amateur indoor athlete? It’s about going back to basics and using a combination of simpler, more affordable tools. For effort, a chest strap heart rate monitor is perfect. For tactical analysis, simple phone video analysis is surprisingly powerful. And for overall session load, the Session RPE (sRPE) method—multiplying your workout duration in minutes by your RPE score (1-10)—provides a scientifically validated measure of training stress, completely free of charge. As the following table shows, the pro-level solution isn’t always the practical one.

This comparative analysis, drawn from systems used at the elite level, clearly shows the trade-offs. While camera tracking is the gold standard for indoor accuracy, its cost makes it irrelevant for amateurs. A combination of simpler tools provides a far more practical solution.

| Feature | GPS Vests | Camera Tracking | Practical Alternative |
|---|---|---|---|
| Indoor Accuracy | Poor (GPS signal lost) | Excellent | Session RPE |
| Cost for Amateurs | $300-500 | $10,000+ | Free-$20 |
| Relative Positioning | Not Available | Excellent | Phone video analysis |
| Effort Metrics | Good (accelerometer) | Limited | Heart rate monitor |
| Setup Complexity | Simple | Professional required | Minimal |
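The Session RPE calculation described before the table (duration in minutes multiplied by RPE) is easy to do by hand, but a short Python sketch shows how a week adds up. The function name and the sample sessions are made up for illustration.

```python
def session_rpe(duration_min, rpe):
    """Session load in arbitrary units: minutes multiplied by RPE (1-10)."""
    if not 1 <= rpe <= 10:
        raise ValueError("RPE must be between 1 and 10")
    return duration_min * rpe


# Made-up week: (duration in minutes, RPE)
week = [(60, 7), (45, 5), (90, 4)]
weekly_load = sum(session_rpe(minutes, rpe) for minutes, rpe in week)
print(weekly_load)
```

Note how the 90-minute easy session (360 units) contributes less than the hard 60-minute one (420 units), which is the point of weighting duration by effort.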

Why generic “readiness scores” might misinterpret your specific fatigue?

As we touched on earlier, a generic readiness score can be misleading. Now, let’s dive deeper into why it often fails to capture the nuance of your specific fatigue. These scores are essentially a blend of several data streams, providing a single, simplified output. As experts from Men’s Health UK note, it’s a calculated guess based on a few key inputs.

Readiness scores are generated using sleep quality, HRV, activity, temperature, and resting heart rate, generally served each morning with guidance on whether to rest or train.

– Men’s Health UK Fitness Experts, The Best Fitness Gadgets for Tracking Your Health

The core problem is that this “blend” is generic. The algorithm doesn’t know you. It doesn’t know that you’re an endurance runner whose muscular system is fried but whose central nervous system (CNS) is fine, or a weightlifter whose CNS is fatigued but whose muscles feel fresh. It can’t differentiate between the deep, systemic fatigue from a heavy deadlift session and the localized muscular soreness from a long, easy run. It treats all stress as equal, which is a fundamental flaw.

Furthermore, your individual response to stress is unique. Some athletes thrive on high training loads and have a naturally lower HRV, while others need more recovery and have a higher baseline. A generic algorithm, designed for the “average” user, may consistently misinterpret your personal data signature. This isn’t to say all algorithms are useless. In highly controlled environments with specific athlete populations, automated algorithms show a remarkable 100% detection rate with 97.8% agreement with expert analysis for certain conditions. However, the algorithm in your consumer-grade watch is not this. It’s a blunt instrument trying to perform a surgical task. Relying on it without critical self-assessment means you’re letting a crude approximation dictate your sophisticated training needs.

Key Takeaways

- Context Is King: A device’s accuracy is not absolute. It depends entirely on the activity you’re doing. A wrist HRM is fine for a walk but fails during HIIT.

- Calibrate Feeling with Fact: The most powerful skill is to use objective data (from a chest strap) to train your subjective perception (RPE), making you less dependent on technology.

- Prioritize Sensor Tech: When considering an upgrade, ignore marketing and focus on whether the core sensor hardware has been meaningfully improved. This usually happens on roughly a 3-year cycle.

Which Training App Feature Prevents Over-Training in Amateurs?

The single most effective feature in a training app to prevent overtraining in amateurs is one that tracks cumulative training load over time. While many apps focus on single-session metrics, the real danger of overtraining comes from a slow, creeping accumulation of stress that goes unnoticed day-to-day. Features that track metrics like Chronic Training Load (CTL) or Acute:Chronic Workload Ratio (ACWR) are designed specifically to spot these dangerous trends before they lead to burnout, illness, or injury.

Chronic Training Load (CTL), popularized by platforms like TrainingPeaks, represents your fitness level as an exponentially weighted average of your daily training stress over roughly the last 42 days, so recent sessions count for more than older ones. A rising CTL indicates your fitness is increasing. However, if it rises too quickly, it’s a massive red flag for overtraining. The key is not just to track the number, but to monitor its rate of change and compare it against your subjective feelings of fatigue, mood, and soreness. This provides an objective guardrail against doing too much, too soon.
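For readers curious how a long-term load number of this kind can be computed, here is a minimal Python sketch. It uses a commonly cited approximation (an exponentially weighted average with a 42-day time constant); individual platforms may calculate their own metric differently, and the function name and sample values are assumptions for illustration.

```python
def chronic_training_load(daily_tss, time_constant=42, start=0.0):
    """Running long-term load from a list of daily training stress values.

    Each day the value moves 1/time_constant of the way toward that day's
    stress, so recent days weigh more than older ones. Rest days should be
    included as 0.
    """
    ctl = start
    history = []
    for tss in daily_tss:
        ctl += (tss - ctl) / time_constant
        history.append(ctl)
    return history


# Made-up example: four weeks of 60 points on six days and one rest day.
four_weeks = ([60] * 6 + [0]) * 4
history = chronic_training_load(four_weeks, start=40.0)
weekly_change = history[-1] - history[-8]
print(round(history[-1], 1), round(weekly_change, 1))
```

Comparing the latest value with the value seven days earlier gives the weekly rate of change that the paragraph above recommends watching.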

This feature transforms your app from a simple logbook into a proactive fatigue management system. It helps you make informed decisions about when to push harder and, more importantly, when to back off. Instead of guessing, you have a data-driven system to guide your training progression safely. The following protocol outlines how to implement this in practice.

Action Plan: CTL Ceiling Monitoring Protocol

- Setup: Activate Chronic Training Load (CTL) or an equivalent long-term load metric in your training app (e.g., TrainingPeaks, Strava’s “Fitness,” intervals.icu).

- Monitor Weekly Rise: Track the weekly increase in your CTL score. A jump of more than 5-8 points per week is a warning sign that your training load is ramping up too aggressively.

- Track TSS Compliance: Monitor your compliance with planned Training Stress Scores (TSS) for each workout. If you are consistently overshooting your targets, it’s a clear indicator that your intensity is too high.

- Log Subjective Feelings: Every day, log your subjective feelings alongside the metrics. Use a simple 1-10 scale for mood, muscle soreness, and overall energy levels.

- Review and Adjust: Once a week, review the patterns. If your CTL is rising sharply but your subjective scores (mood, energy) are declining, it is a definitive signal to immediately reduce your training load for the upcoming week.
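The weekly review in the last step can be sketched as a small check in Python. This is an illustration only: the 5-point ramp limit is taken from the low end of the range mentioned above, "wellness" here means the average of your daily mood and energy ratings (soreness is left out because a higher soreness number is worse, not better), and the function and flag names are hypothetical.

```python
from statistics import mean


def weekly_review(ctl_start, ctl_end, wellness_prev, wellness_now, ramp_limit=5.0):
    """Return warning flags for the week.

    ctl_start / ctl_end: long-term load at the start and end of the week.
    wellness_prev / wellness_now: daily 1-10 ratings (mood, energy) for the
    previous week and the week under review.
    """
    ramp = ctl_end - ctl_start
    flags = []
    if ramp > ramp_limit:
        flags.append("ramp_too_fast")
    if ramp > 0 and mean(wellness_now) < mean(wellness_prev):
        flags.append("load_up_wellness_down")
    return flags


# Made-up weeks for illustration.
print(weekly_review(50, 57, [7, 8, 7], [5, 6, 5]))
print(weekly_review(50, 53, [7, 7], [7, 8]))
```

When both flags appear together, that corresponds to the situation the protocol treats as a signal to reduce load in the coming week.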

Start today by choosing one metric you collect—be it heart rate, power, or even your readiness score—and begin to critically analyze it instead of just passively observing it. Compare it to how you feel. Question its accuracy in different contexts. That is the first real step toward making your technology work for you, not the other way around.